The Myth of Mythos

On Mythos, Project Glasswing, and Doomerism Marketing

Anthropic announced Project Glasswing on April 7th, a cybersecurity initiative built around an unreleased model called Mythos. Anthropic claims that, as an unintended consequence of its training and scaling, Mythos is unusually capable at vulnerability discovery and exploit generation, and says the model is too powerful and dangerous to be released to the public.

We’ve heard this narrative before. Μύθος means story in Greek, and it has become clear from the initial reactions that the narrative surrounding Mythos is all over the place both online and in the mainstream media.

AI researchers like Gary Marcus are inclined to believe this is mostly hype. Same narrative from an AI frontier company, different day. We won’t let you use the model, but you should trust the paper we’ve released on it, and you should be thanking us for not releasing this into the wild.

On the other hand, our favorite doomers, like Eliezer Yudkowsky, are calling for you to immediately back up your data onto airgapped hard drives. If even half of what Anthropic is claiming is true, taking extra precautions isn’t just smart, it is the most logical choice. Better safe than sorry, right?

Anthropic’s messaging was clear on this: it is a watershed moment for security. Anthropic is partnering with Apple, Cisco, Google, Microsoft, NVIDIA and others. The top dogs involved in critical software infrastructure get to play with Mythos first (and, just maybe, assist with hardening the defensive perimeter of the internet).

Mythos certainly got our attention. But it feels like the truth lies somewhere in the middle. It isn’t the end of the internet as we know it, but it also isn’t a nothingburger.

Like you, I wanted to separate the signal from the noise on this. So let’s do that.

Why Mythos is Overhyped

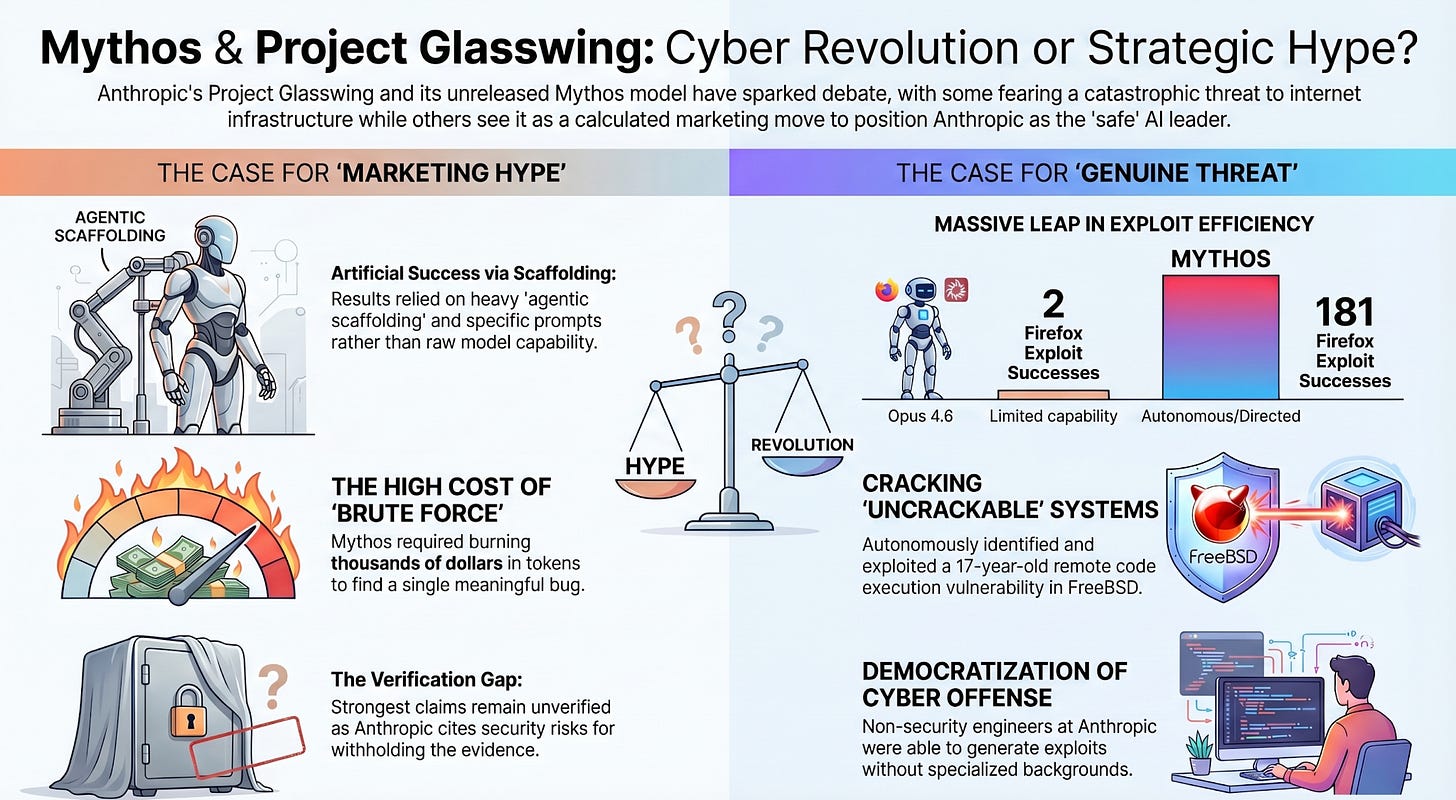

Let’s first address the main reasons people think Mythos is overhyped. To start, the public cannot verify the strongest claims. We’re relying on information from insiders, internal write-ups, and media accounts. It can be hard to separate the doomer marketing from the reality.

The flashiest results are selectively chosen, and were obtained under conditions materially easier than the real world. Anthropic was using agentic scaffolding in a container with source code, alongside a prompt that was basically ‘find a security vulnerability in this program’. These results came from a model that was heavily tooled, guided, and optimized to maximize success.

There is a difference between a proof of capability and a real-world operational threat. The Firefox exploit story that circulated is a great example of this. The model was given the best-case scenario for finding exploits, and did not have to deal with typical defense-in-depth mitigations.

Anthropic admits that most of the sensational vulnerabilities and exploits cannot be publicly disclosed, because they haven’t been patched. This is the responsible thing to do, but it creates a situation where the evidence we’re being asked to trust is hidden.

This deep dive is enlightening. As the authors point out, when free and open-weight models were asked to perform a similar analysis, they also found the flagship OpenBSD bug. The OpenBSD vulnerability was one of the strongest and most commonly cited reasons in the media for being seriously alarmed by the abilities of Mythos. But that isn’t the full picture. Anthropic burned thousands of dollars of tokens in a brute-force attempt to find more bugs. A more accurate headline would be: ‘a very strong model, running at scale inside a carefully designed search scaffold, found a meaningful bug at a nontrivial aggregate cost’.

The other big project that was cited was FFmpeg and the pattern here is the same. Brute force, scaffolding, time, and money. Mythos found vulnerabilities after several hundred runs, and after burning nearly ten thousand dollars. It is still impressive, but it isn’t magic.

Finding vulnerabilities and exploiting them are not the same thing. After all, we’re well aware of Claude’s constitution, and Anthropic’s models are arguably the most ‘aligned’ models out there. They can still be jailbroken, but they will naturally resist if pushed to engage in nefarious activity.

The conditions under which a model arrives at its results matter. Was it sandboxed? How much was it guided by a person? How long can a model like Mythos run at a normal organization without having the kind of advanced automated in-house scaffolding that Anthropic has built? There are so many variables, and still so many unanswered questions.

Anthropic is trying to convince the public, lawmakers, and investors that it is doing the right thing. That it is the ‘good AI’ company, and nothing like OpenAI. It is trying to convince enterprise organizations that it has the best models for infrastructure and security work, and that its frontier lab is #1. These business aims create a conflict of interest around the claims, and extraordinary claims require extraordinary evidence.

The public is not one release away from universal cyber collapse, but it is very clear that current frontier systems can do surprisingly capable offensive work. Everything needs to be taken with a grain of salt.

So, what about the other side?

Why Mythos is a Big Deal

Mythos can identify and exploit zero-day vulnerabilities across every major operating system and every major web browser when directed. Anthropic backs this up with detailed write-ups.

The OpenBSD example is also worth digging into more:

Anthropic says Mythos identified a vulnerability in OpenBSD’s SACK implementation that could let an attacker crash any OpenBSD host responding over TCP.

The company emphasizes that the bug was tied to a 1998 addition of SACK support and exploited subtle interactions around sequence number arithmetic, linked-list hole tracking, and signed integer overflow.

OpenBSD is a famously security-focused operating system. This is not trivial.
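To give a sense of why sequence number arithmetic is subtle, here is a minimal Python sketch of the wraparound-aware comparison idiom commonly used for 32-bit TCP sequence numbers. It illustrates the general technique only and is not a reconstruction of the OpenBSD bug, whose details are described only at a high level above. The helper names `to_int32` and `seq_lt` are hypothetical.

```python
def to_int32(x: int) -> int:
    """Interpret the low 32 bits of x as a signed 32-bit integer."""
    x &= 0xFFFFFFFF
    return x - 0x100000000 if x >= 0x80000000 else x


def seq_lt(a: int, b: int) -> bool:
    """Wraparound-aware 'a comes before b' for 32-bit sequence numbers."""
    return to_int32(a - b) < 0


# Near the wrap point, a plain comparison and the modular one disagree.
a, b = 0xFFFFFFF0, 0x00000010  # b sits 32 bytes after a, past the wrap
naive = a < b
modular = seq_lt(a, b)

# When two values are exactly 2**31 apart, the signed difference sits on the
# overflow boundary and each value appears to precede the other.
far_a, far_b = 0x00000000, 0x80000000
ambiguous = (seq_lt(far_a, far_b), seq_lt(far_b, far_a))

print(naive, modular, ambiguous)
```

The second case is the kind of edge that makes this class of code hard to audit: the comparison is only meaningful while the two values stay within half the sequence space of each other, and any logic that lets them drift further apart silently gets the wrong answer.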

The economics of vulnerability discovery are changing. What costs 20,000 dollars to run today on a server cluster could cost 500 dollars in a few years on consumer hardware.
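As a back-of-the-envelope check, going from 20,000 dollars to 500 dollars is a 40x reduction. The sketch below works out the constant yearly decline that would imply. The 3-year and 5-year horizons are assumptions standing in for ‘a few years’, and the function name is hypothetical; neither comes from Anthropic.

```python
def implied_annual_factor(cost_now: float, cost_later: float, years: float) -> float:
    """Constant yearly multiplier that takes cost_now to cost_later in `years`."""
    return (cost_later / cost_now) ** (1.0 / years)


cost_now, cost_later = 20_000.0, 500.0  # figures from the paragraph above
reduction = cost_now / cost_later       # overall reduction factor

for years in (3, 5):  # assumed horizons for "a few years"
    factor = implied_annual_factor(cost_now, cost_later, years)
    print(f"{years} years: cost falls about {(1 - factor) * 100:.0f}% per year")
```

Under these assumptions the cost would need to fall by roughly half or more every year, which is steep but is the kind of curve this argument depends on.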

I’ve been running Gemma 4 for the last week on my phone and computer, and it is incredible what these local models can do fully offline. Just imagine where we’ll be with local models and their potential capability in a few years. Uncensored, black-hat-oriented generative AI models will exist, and they will be as easy to download as gaming emulators. The security of the internet is going to have to deal with that.

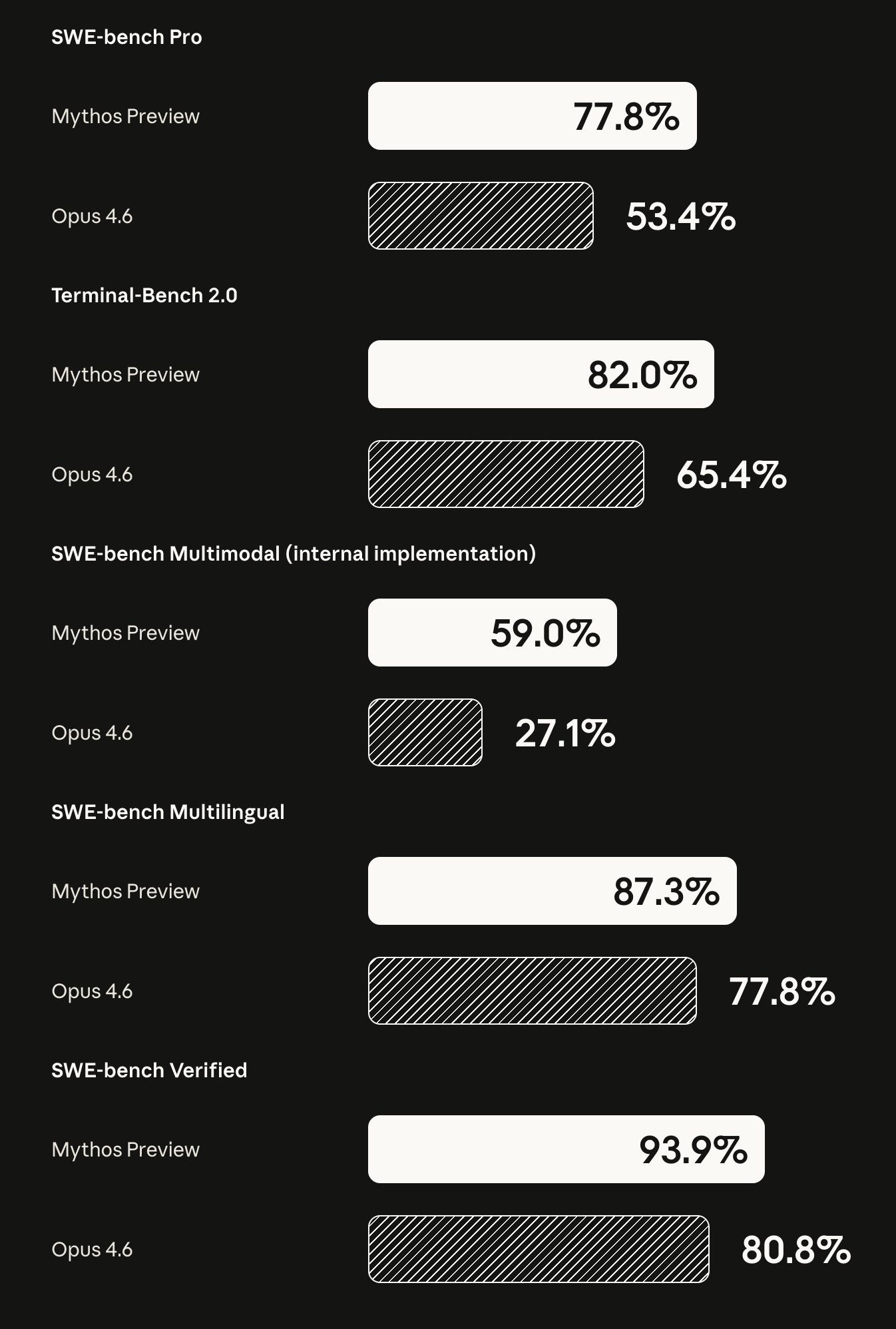

The capability jump is real. In the Firefox comparison, where Opus 4.6 and Mythos were asked to find vulnerabilities in Firefox 147’s JavaScript engine, Opus was only able to develop working exploits two times out of several hundred attempts. Mythos, on the other hand, was able to develop working exploits 181 times. This is a dramatic jump in exploit ability, even if you discount for the harness and the missing sandbox mitigations.

The capabilities of Mythos are well documented and numerous:

For FreeBSD, Mythos fully autonomously identified and exploited a 17-year-old remote code execution vulnerability in FreeBSD’s NFS server, triaged as CVE-2026-4747, allowing an unauthenticated attacker to obtain full root access.

For the Linux kernel, Mythos successfully chained together two, three, and sometimes four vulnerabilities to achieve local privilege escalation, including combinations of KASLR bypasses, heap corruption, and struct manipulation.

Mythos autonomously discovered the needed read and write primitives in multiple browsers, assembled them into JIT heap sprays, and in one case helped produce a webpage that, when visited, would let the attacker write directly to the operating system kernel.

Mythos wasn’t trained for cyber offense, and these capabilities are a downstream consequence of general improvements across the board. This makes governing AI even more important, and should be concerning to anyone following the progress.

The benchmarks show the improvements. Even if you believe only the benchmarks and disregard everything else, the results are still notable. There are major SWE-bench jumps compared to Opus, so emergent capabilities should not come as a major shock.

Anthropic held a review period before releasing Mythos internally, and feared that the model might not be safe enough to use at all. Researchers noted that the model had a tendency to lie or take shortcuts to achieve user-directed goals at all costs, and that it exhibited ‘human-like’ latent emotions. Of course, these models are trained on language, and this isn’t the first time anyone has made these observations. The model has other notable eccentricities, like ending conversations with a single turtle emoji when it felt interactions were no longer necessary or productive.

Engineers at Anthropic with no background in cybersecurity got exploit outputs using Mythos. Offensive skills can and will become more accessible. You don’t even need to be a prompt engineer.

My Take

It is impossible to disentangle the AI doomerism marketing from the real concerns. Project Glasswing is also brilliantly designed, and getting major vendors like Microsoft and Cisco to adopt and eventually rely on Anthropic’s tools will make Claude indispensable. Anthropic is pushing to make Claude a major part of vendor ecosystems, and what better way to do that than with an alarmist post with insane benchmarks and figures?

Anthropic’s deliberation around using Mythos internally has given me pause. I think the company legitimately believes the model has the capability to be enormously harmful, and the information it has provided to back this up has become headline news for a reason.

AI doesn’t have to be AGI to wreak havoc on the internet as we know it. AI doesn’t have to be conscious to disrupt our global economy, and it doesn’t have to be self-aware to cause major damage. The slow, deliberate rollout and careful testing of this software appears to have been the only rational option. Other labs aren’t far behind, and releasing it into the wild knowing about the risk would have been a potential disaster for Anthropic.

I don’t think it is a nothingburger. The world isn’t ending tomorrow, but I strongly think that the increased capabilities of models like Mythos will accelerate the evolution of the modern web. Beyond security concerns, the internet will have to evolve with a far greater emphasis on content provenance, proof of personhood, and authenticity.

The internet was made for you and me to use. The entire world has come to rely on it for just about everything. Along the way, we’ve figured out the security necessary to make it all possible. We know it will evolve, but what it will evolve into is still unclear.

Thanks for reading.