Nobody Is Keeping Up: AI Governance in 2026

Insights from the trenches on shadow AI, agentic regulation, and a global patchwork that's still being stitched together

Dario Amodei casually mentioned in his recent essay, The Adolescence of Technology, that Claude had been caught behaving differently when it thought it was being watched versus when it thought that no one was looking. That is alignment faking, the equivalent of employees changing their behavior whenever the boss is around. For many people, this doesn’t come as a shock.

Earlier in January, Grok made headlines for ‘allowing users to upload photographs of strangers and celebrities, digitally strip them to their underwear or into bikinis, put them in provocative poses and post the images on X’. Singapore just announced the Model AI Governance Framework for Agentic AI at the World Economic Forum in Davos, and South Korea’s AI Basic Act just went into effect. The list goes on.

If you’re building, selling, or using AI in your daily workflow, the ground is shifting fast. Regulations and laws are changing quickly, and the pace is set to pick up in 2026. We’re learning that AI governance needs to be not only proactive, but quick enough to protect us from a wide range of potential harms. AI is progressing fast enough that there’s real reason to be concerned.

I’ve been thinking about this a lot. In late January I had the opportunity to attend a governance-focused event in Tokyo hosted by TAI that brought together experts who are deeply knowledgeable about the state of AI governance. The speakers were Karsten Klein, a consultant helping Japanese enterprises adopt ISO standards; Harold Godsoe, a lawyer who chairs the AI practice group for Mackrell International; and Alex Sayle, a security head who has built compliance systems from scratch. Each brought a unique perspective on the state of AI governance, and their perspectives mapped well onto the broader global picture.

The themes of that evening, and what I’ve looked into since then, have formed the backbone of this article. Think of it as a field report on where AI governance stands in early 2026, complete with insights from people at the center of it.

The Global Patchwork Today

As Godsoe mentioned in the talk I attended, law is friction, but it doesn’t make things impossible. Law can also reduce friction, and make things that were previously seen as difficult much easier through clear guidance and safe harbors.

Friction varies wildly globally, depending on where you operate.

The EU is further along, or at least it is on paper. The AI Act’s first prohibitions went live in February of 2025, covering social scoring, manipulative AI, and untargeted facial recognition scraping. As of August 2025, general-purpose AI model providers have obligations when it comes to documentation and transparency, but high-risk AI obligations don’t activate until August of this year. The machinery for governance in the EU has been built, but it hasn’t really started running yet.

The United States is in a different place entirely. The Trump administration revoked Biden’s AI executive order and has been actively hostile towards state-level AI regulations since taking office. The direction is very clear here – deregulate AI at the national level, and fight any states that are trying to fill in that gap. The AI Litigation Task Force was even created in part with the purpose of threatening to withhold federal funding from states that regulate AI.

The states are a patchwork. Plenty of AI bills have been introduced across state legislatures, with more than 145 enacted so far. California requires frontier model developers to make public disclosures, and Texas prohibits AI systems that promote self-harm. To date, the only federal AI legislation in the US that has actually passed is the Take It Down Act, which criminalizes AI-generated non-consensual intimate imagery and requires platforms to remove it within 48 hours. This is where we stand in the United States: a patchwork and a mess of rules and regulations.

Other countries are in various stages:

Japan’s AI Promotion Act passed in May of 2025 and went into effect in September, but it’s a framework law with no enforcement penalties. Think: guidelines and principles, but nothing with teeth.

South Korea passed the AI Basic Act in late 2024, which made it the first comprehensive AI law in the Asia-Pacific region. Fines for breaking the law max out at around $21,000, practically a slap on the wrist for larger businesses.

China is embedding AI governance within its amended cybersecurity law, mandating visible labels plus metadata on all AI-generated content (this is smart!). Enforcement mechanisms are more serious.

Singapore is way ahead of the curve, releasing a governance framework specifically for agentic AI in January 2026. It is by far the most forward-thinking framework so far globally.

Perspectives and Shadow AI

We begin with an uncomfortable thought: AI capabilities and organizational readiness are advancing at different paces. This isn’t the first time we’ve seen this pattern. The internet and blockchain come to mind, but this time feels different. The first wave of genAI regulations was barely making its debut, and here we are talking about agentic swarms and AGI.

At a company, especially a large organization, people can see AI differently. Developers want speed and the ability to experiment. Founders want rapid growth and time to market. Investors want accountability and risk management. The tension between these perspectives is often where things break down. A crisis involving AI is just a matter of time, so you’ll eventually find yourself responding either on your own terms or reactively.

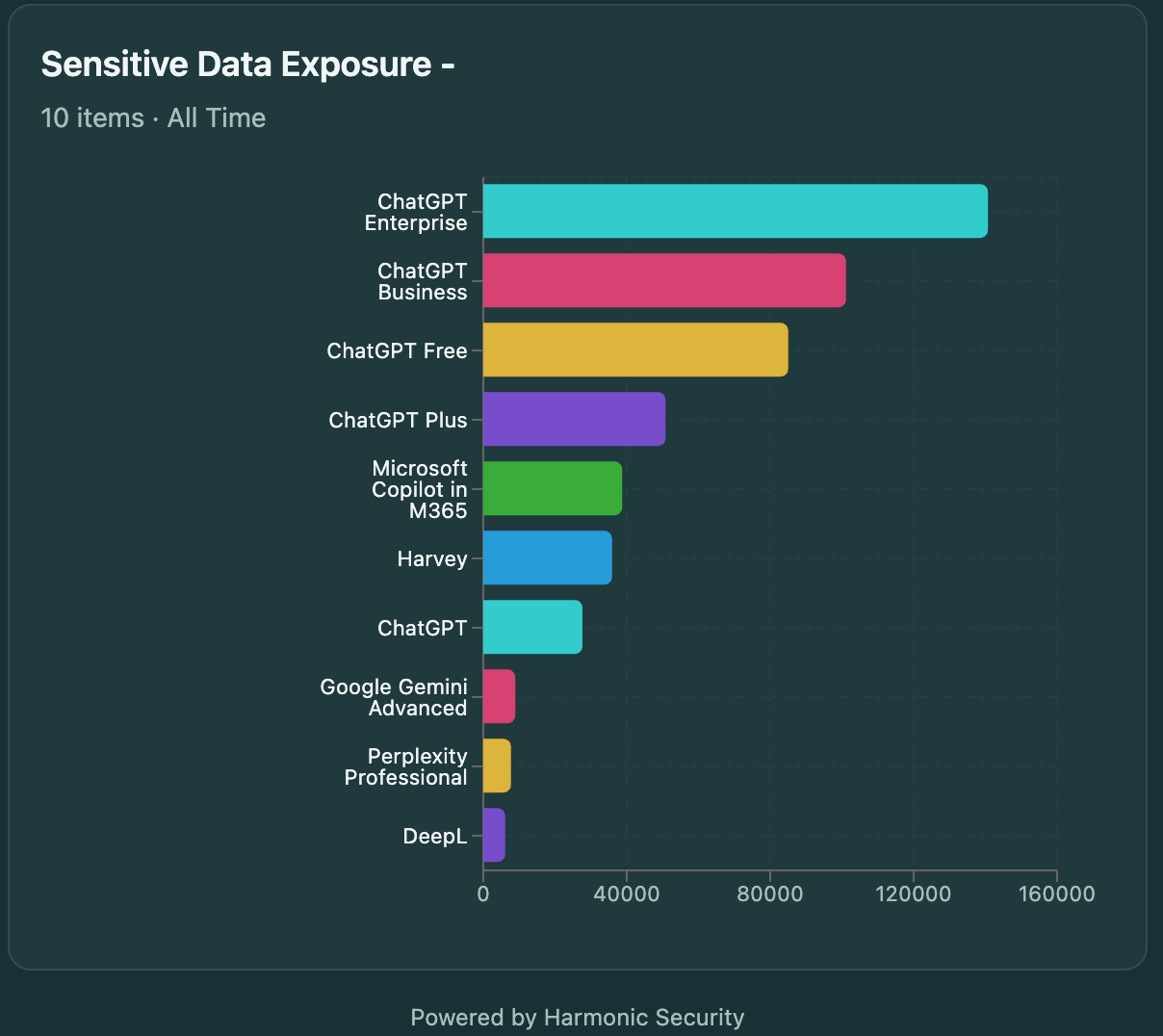

This is no longer hypothetical. It has been just three short years since the release of ChatGPT, and so much has changed in terms of how we think about AI usage at work. IBM’s 2025 Cost of a Data Breach Report found that 20% of organizations experienced a data breach tied to shadow AI, meaning employees using unapproved AI tools with company data. Harmonic Security analyzed over 22 million prompts and found that while nearly half of companies have an official AI subscription, over 90% of organizations have employees using AI through personal accounts. There’s a gap between what companies think is happening with AI inside their walls and what’s actually happening. It’s enormous.

Karsten Klein described the scenario plainly: employees bring their phones, take screenshots of sensitive information, feed it to ChatGPT or whatever tool they prefer, and use the output to help with work. The company is completely unaware. Their data security policies are being violated in real time. Corporate secrets and personal data are leaking to ungoverned external models.

And the company has no idea it’s happening.

This is shadow AI, and it’s one of the best examples of why governance isn’t optional. It has become entirely necessary. The problem will likely only get worse with the rise of wearables like smart glasses.
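To make the visibility gap concrete, here is a minimal sketch of one way a security team might start looking for shadow AI on the network it controls. Everything in it is an assumption for illustration: the log format (`<timestamp> <user> <domain>`), the short watch list of AI domains, the approved list, and the function name `find_shadow_ai` are all hypothetical, not taken from any vendor’s product or from the talk.

```python
from collections import Counter

# Illustrative watch list of AI service domains; deliberately not exhaustive.
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}
# Hypothetical set of AI services the company has officially approved.
APPROVED = {"claude.ai"}


def find_shadow_ai(log_lines, ai_domains=AI_DOMAINS, approved=APPROVED):
    """Count requests per (user, domain) to AI services that are not approved.

    Each log line is assumed to look like: "<timestamp> <user> <domain>".
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip malformed lines rather than guess at their meaning
        _, user, domain = parts
        domain = domain.lower()
        if domain in ai_domains and domain not in approved:
            hits[(user, domain)] += 1
    return hits


# Made-up sample data, for demonstration only.
sample_log = [
    "2026-02-01T09:00:00Z alice chatgpt.com",
    "2026-02-01T09:05:00Z alice chatgpt.com",
    "2026-02-01T09:07:00Z bob claude.ai",
    "2026-02-01T09:09:00Z carol example.com",
]

report = find_shadow_ai(sample_log)
for (user, domain), count in sorted(report.items()):
    print(f"{user} -> {domain}: {count} request(s)")
```

Note what this does not catch: the scenario Klein described, where someone photographs a screen with a personal phone, never touches the corporate network at all. Log scanning narrows the gap, but it is no substitute for training and policy.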

AI Governance Integration

A point constantly stressed is this: AI governance is not some separate structure you bolt onto your existing organization. It is not a standalone policy document, and it isn’t a new department.

Creating a single AI policy document that exists outside your existing governance structure is a mistake. AI ends up touching risk, usage, change management, different jurisdictions, and local markets. It is difficult to isolate and say ‘here is the AI policy’. It is distributed through your governance structure, or at least it should be.

Organizations that treat AI governance as a separate silo almost always end up with the worst outcomes. Companies that succeed with AI tend to start with strategy first, build governance to support that strategy, and then select the tools they need. Retrofitting governance after the fact doesn’t work well. Plenty of companies have had to shut down entire AI solutions because they couldn’t retrofit governance onto something it was never designed for.

Incidents Drive Everything

It’s obvious, but true: incidents will drive regulation faster than any principled policy process.

The soft-law approach to AI will eventually harden; it is just a matter of time. A series of incidents will occur that make people feel as though things are not under control. Governments will respond and fill in the gaps. A great example is GDPR. It was born as a response to years of data misuse and repeated catastrophes, which led to stricter regulation. AI will be no exception to this.

We saw this play out a little over a month ago. The Grok nudification crisis in early 2026 triggered investigations in half a dozen countries, along with bans and, in California, cease-and-desist orders. In the US, deepfake CSAM reports ballooned from 4,700 in 2023 to over 440,000 in the first half of 2025. This number will only grow as the technology matures.

Alex Sayle offered a metaphor during his talk that captured this dynamic perfectly: policies are like tree rings. You can look at a tree’s rings and read its history: floods produce wide rings, droughts produce narrow ones, and damage from fires leaves scars. Organizational policies work the same way, recording incidents. When external events occur, they get encoded into policies. You can look back through a company’s policies and tell what went wrong, why, and when. Frameworks like ISO help companies benefit from other organizations’ scars without having to experience the trauma themselves. Standards become a kind of borrowed wisdom.

Two ISO standards are emerging as the practical foundation for AI governance, and organizations that get familiar with them now will be better positioned as regulation hardens globally:

ISO 27001 (Information Security Management): The established baseline for information security, with 93 controls you select based on your business. If you already have this, you’re not starting from scratch on AI governance. The shared Annex SL structure means you can achieve ISO 42001 up to 40% faster.

ISO 42001 (AI-Specific Management): Released about two years ago and still early, with roughly 40 organizations certified as of mid-2025, but it’s quickly becoming a business prerequisite. Colorado’s AI Act gives ISO 42001 compliance a legal safe harbor, and Microsoft’s SSPA program mandates it for “Sensitive Use” AI systems. If you already have 27001, you only need to add roughly 17 AI-specific controls.

The Upcoming Agent Nightmare

Something that has given me pause is how hard agentic AI is going to be to regulate. Headlines from just the last month have shown that agentic AI has gone from being theoretically useful to being both genuinely helpful and a serious potential security nightmare. We’re going to be living in a world, very soon, where agentic AI is performing actions on behalf of people and organizations. Governance hasn’t even remotely caught up to this. We’re still stuck in early 2023.

Gartner is projecting that by 2028, more than a third of enterprise software offerings will include agentic AI, with up to 15% of day-to-day decisions being made autonomously. Right now, people are experimenting with OpenClaw. First, it’s a toy, then it’s genuinely useful. This is the worst it is ever going to be. From ordering pizza to automating your entire workday, I have no doubt that we’re going to see some seriously impressive agentic tools hit the mainstream in 2026.

It’s clear that humans will be the ones that are responsible for the actions taken by an agent. That may be the software provider, or they may push that responsibility onto the user. With that said, some early legislation is pointing to legal systems treating AI as an extension of the entity deploying it, not as a separate actor that absorbs liability.

The talk in Tokyo made it clear: AI governance needs to be embedded into existing structures, not siloed. You can’t govern an autonomous agent with a standalone AI policy document; you need to weave governance into risk management, your authorization frameworks, data governance, and incident response. When AI makes decisions independently, the practical frameworks for AI policy become exponentially more complex.
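As a rough illustration of what “weaving it in” could look like in practice, the sketch below puts an authorization check in front of every agent action and records each decision for later incident response. It is a toy under stated assumptions: the `AgentGate` class, the `ACTION_RISK` tiers, and the agent and approver names are all hypothetical, and a real deployment would pull risk tiers from the organization’s existing risk register rather than a hard-coded dictionary.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical risk tiers per action type, standing in for an existing risk register.
ACTION_RISK = {
    "read_calendar": "low",
    "send_email": "medium",
    "wire_transfer": "high",
}


@dataclass
class AgentGate:
    """Decides whether an agent action may proceed and logs every decision."""

    auto_approve: set = field(default_factory=lambda: {"low"})
    audit_log: list = field(default_factory=list)

    def authorize(self, agent_id, action, approved_by=None):
        # Unknown actions default to high risk instead of slipping through.
        risk = ACTION_RISK.get(action, "high")
        # Low-risk actions pass automatically; anything else needs a named human.
        allowed = risk in self.auto_approve or approved_by is not None
        # The audit trail is the evidence a regulator or incident review would ask for.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "risk": risk,
            "approved_by": approved_by,
            "allowed": allowed,
        })
        return allowed


gate = AgentGate()
print(gate.authorize("agent-7", "read_calendar"))
print(gate.authorize("agent-7", "wire_transfer"))
print(gate.authorize("agent-7", "wire_transfer", approved_by="cfo@example.com"))
```

The design choice worth noticing is that the named approver ends up in the log next to the action. That mirrors the point above about liability: the record ties each autonomous action back to a responsible human or entity.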

What You Can Do

We’re still early in AI regulation in general. We’ll look back on 2026 in a decade and be shocked we made it this far without stricter regulations. I’ve distilled the lessons so you can take action now, and not wait until you’re in the middle of a disaster to properly respond:

Start with strategy, not tools. Companies that succeed start with an AI strategy and build governance around it. Companies that fail start with tools and try to bolt governance on later. Switching later is expensive, when it’s possible at all.

Get your house in order before regulators come knocking. Regulators come after you retroactively. They can show up and demand evidence of what you were doing a year or two ago. Put down a foundation now so you can meet regulators with evidence in hand rather than being the one they’re targeting.

Treat frameworks as borrowed scars. ISO 42001 and NIST AI RMF encode lessons from many organizations’ experiences. You can benefit from their mistakes without making them yourself. Even without formal certification, having controls, policies, and monitoring evidence puts you in a fundamentally different position.

Automate compliance or drown. The manual approach to governance doesn’t scale. If you have dozens of software platforms, vendors, and engineers generating the majority of code with AI, point-in-time audits are meaningless. Continuous monitoring is the only way.

Take shadow AI seriously. It’s in your organization right now. Training helps, but enforcement is the real challenge. The companies that figure this out first will have a meaningful advantage.

Watch the agentic AI space closely. The governance frameworks being built now will define how autonomous AI systems operate within legal and organizational structures for years to come. Singapore’s framework is the first serious attempt. It won’t be the last.

Moving Forward

If you’re anything like me, you’re not actively following the AI governance space. You’re probably too busy trying out the latest video diffusion models, building your personal agentic army, or ignoring the space as much as humanly possible. But checking in on the space every few months is important: AI governance is going to matter very soon, and it’s going to impact our lives in a way that no other technology has.

2026 will be the biggest year so far for AI governance, and I predict more will change this year for AI governance than in the last few years combined. The EU’s high-risk AI obligations activate in August, Colorado’s AI Act kicks in by mid-year, and agentic AI frameworks are just getting started. The dominoes are lined up.

Thanks for reading.

- Chris