AI Brain Fry Is Real

On the human interface problem with agentic AI

I hit a wall last Wednesday.

I had three Claude Code sessions running in separate Ghostty tabs and an Antigravity agent revising specs, all while I debugged an APK that was failing to compile in Android Studio. At the same time, Gemini and ChatGPT were both running deep research on a topic I was considering writing about, and Cowork was updating my personal docs in Notion via MCP.

This is a new reality. In the span of just a couple of months, I’ve found myself constantly shifting between tabs, spending no more than a few minutes on any single task before inevitably launching something else in parallel. It’s addictive, and yet I still feel like I’m not doing enough. If I don’t have multiple agents or AI programs running during the day, I feel like I’m missing out. New needs emerge when you work like this, and the next thing you know you’re running Claude Code remotely so you can control it from your phone, or chatting with your Zo Computer over Telegram to update tasks that aren’t formatted exactly the way you’d prefer.

At any given time, I’m using up to six different programs to generate code or iterate on ideas, although the mix varies wildly: ChatGPT, Gemini, Claude Code and Cowork, various models via OpenRouter, Zo Computer, and my personal OpenClaw. Zo has helped consolidate some interfaces, but I still jump between them during work sessions.

All of this is producing a kind of fatigue I’ve never experienced at work before, and I know I’m not the only one. People mention it constantly on X.

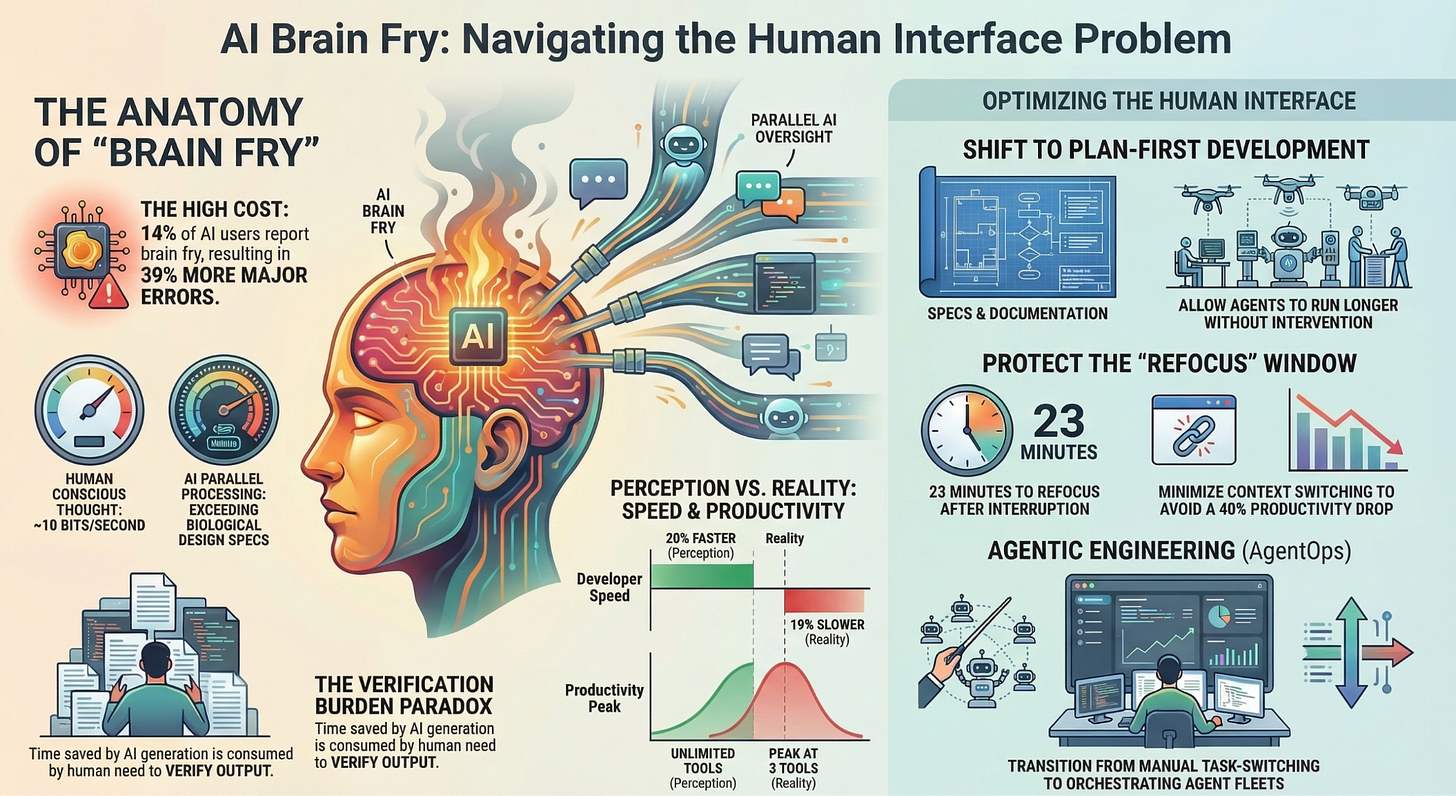

Turns out, the experience has a name. AI brain fry is mental fatigue from using or overseeing AI tools beyond one’s cognitive capacity. It’s becoming more common in 2026 because of the proliferation of agentic AI, with everyone and their grandma trying to build agent swarms and teams of agents that work in parallel.

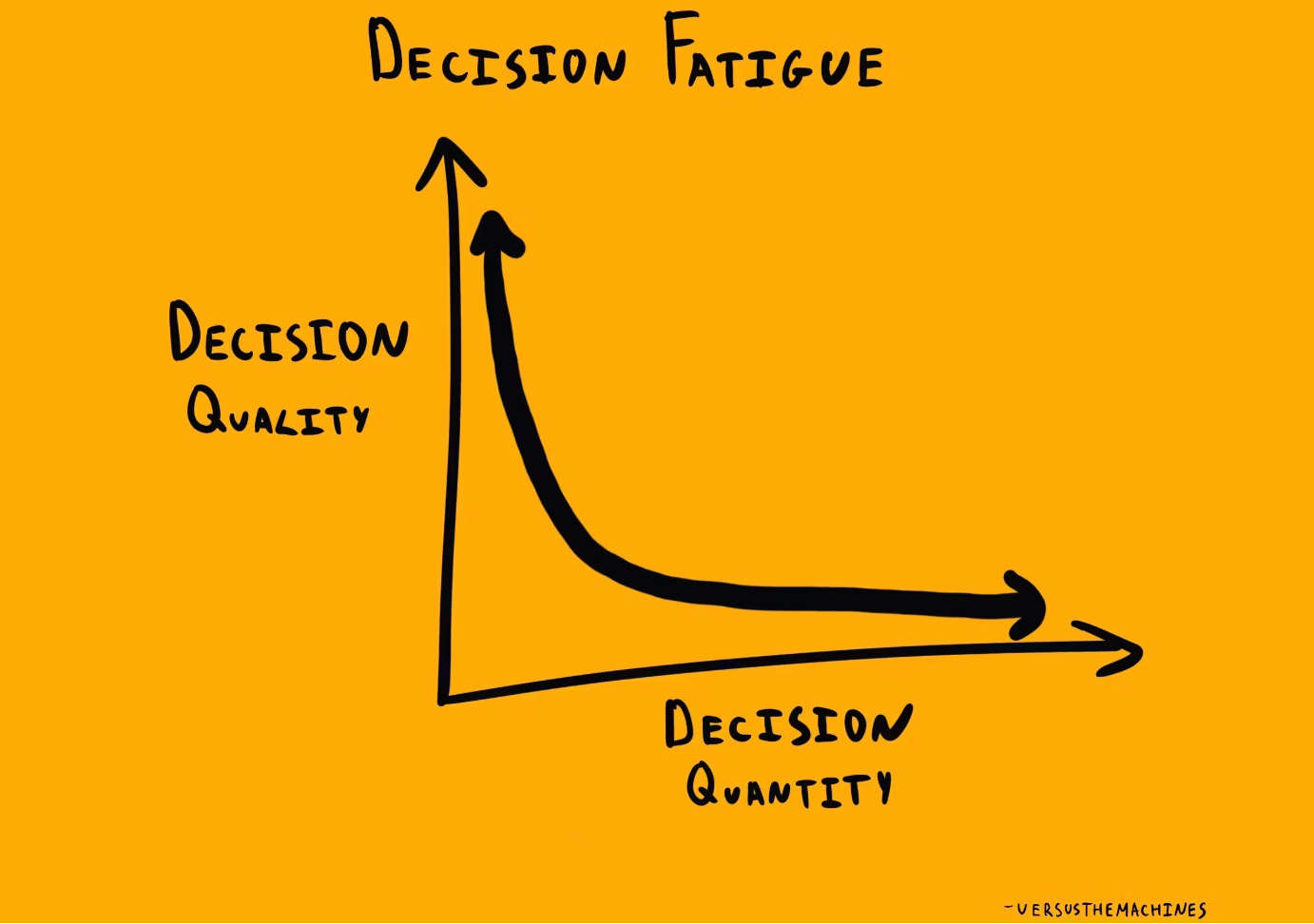

We’re all familiar with decision fatigue, but the new paradigm for interacting with this technology has redefined what it can look like. It seems obvious, but more context switching leads to more human errors. Boston Consulting Group and Harvard Business Review published a rigorous study on this in March 2026, surveying 1,488 full-time U.S. workers. Of the AI users surveyed, 14% reported brain fry, and those experiencing it showed 33% higher decision fatigue and 39% more major errors.

This is becoming a universal experience for people using agentic AI. Brain fry sets in fast, and context switching is slaughtering our ability to do sustained, thoughtful work. Some level of AI usage is clearly productive, but we’re starting to see the cons far outweigh the pros. Our brains can’t keep up with the output or the tech.

Welcome to the human interface problem.

The Moving Bottleneck

For decades, the hardest parts of software were turning ideas into working code and polishing that code for real use. AI agents have obliterated that constraint. Y Combinator’s Winter 2025 cohort included startups whose codebases were 95% AI-generated. At Anthropic, roughly 90% of Claude Code’s own codebase was written by Claude Code, with its creator, Boris Cherny, managing five or more simultaneous workstreams and shipping 20 to 30 pull requests a day.

Teams with high AI adoption have seen pull request sizes balloon and code review times surge. More AI adoption means bigger PRs, longer reviews, and more bugs to find and fix. Knowledge workers report, and experience firsthand, that AI creates more coordination work, not less.

Work is changing. In what feels like less than a year, software development has shifted from generative work (humans primarily producing code) to evaluative work (humans reviewing AI- and human-generated code). Once the bottleneck of writing ‘good enough’ code is removed, thoughtfully curating output and keeping a product orientation matter even more.

Hongbo Tian summed this up super well: “When you wrote 50 lines, you lived through every decision. When AI generates 500 lines, you’re reading someone else’s novel and trying to spot the plot holes, while the author is a system that has no concept of plot.”

Creating can be energizing. Reviewing, by contrast, is draining. Evaluative work gives you decision fatigue, and decision fatigue sucks.

For our collective sanity, we need a change.

A recent Caltech study found that conscious human thought processes information at only about 10 bits per second, even though our sensory systems gather roughly a billion bits per second from the environment. We think sequentially, not in parallel. If you’re running several agentic coding tasks at once, you’re exceeding your brain’s natural design specs. You might not be writing the code, but keeping track of fast work happening in parallel easily exceeds your working memory.

Task switching compounds this problem. Research at UC Irvine indicates that it takes about 23 minutes to fully refocus after an interruption, and task switching can reduce productivity by up to 40%. Then there’s attention residue: part of your attention stays stuck on the previous task after you’ve moved on, so you’re never fully committed to the one in front of you. Every switch creates drag, and that drag accumulates throughout the day.
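To put the 23-minute figure in concrete terms, here is a toy model in Python. It is a back-of-the-envelope sketch, not anything from the UC Irvine research: it assumes every review session costs one full refocus period, ignores partial attention, and uses hypothetical numbers for the length of the workday, the number of agent outputs, and the time spent reviewing each one.

```python
from dataclasses import dataclass

# Minutes to fully refocus after an interruption (the UC Irvine figure cited above).
REFOCUS_MIN = 23


@dataclass
class WorkDay:
    """Hypothetical inputs for one day of supervising agents."""
    length_min: int   # total working minutes in the day
    outputs: int      # agent outputs that need a human review
    review_min: int   # minutes spent reviewing each output


def focused_minutes(day: WorkDay, review_sessions: int) -> int:
    """Minutes of fully refocused work left after reviews and refocus penalties.

    Toy assumptions: each review session is one interruption that costs a full
    REFOCUS_MIN afterwards, and total review time is the same no matter how the
    outputs are grouped into sessions.
    """
    if day.outputs > 0 and not 1 <= review_sessions <= day.outputs:
        raise ValueError("need between 1 and `outputs` review sessions")
    review_time = day.outputs * day.review_min
    refocus_time = review_sessions * REFOCUS_MIN
    return max(0, day.length_min - review_time - refocus_time)


day = WorkDay(length_min=480, outputs=12, review_min=5)
fragmented = focused_minutes(day, review_sessions=12)  # respond to each output as it lands
batched = focused_minutes(day, review_sessions=3)      # review in three scheduled blocks
print(f"fragmented: {fragmented} min, batched: {batched} min")
```

With these made-up inputs, answering each of 12 outputs as it arrives leaves 144 focused minutes, while reviewing the same outputs in three scheduled blocks leaves 351. Real costs will differ, but the shape of the result is the point: in this model the refocus penalty scales with how often you’re interrupted, not with how much you review.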

The problem with the current state of AI is that you have to keep context switching to move things forward. Setting it and forgetting it just doesn’t work. Reviews have to happen, and that includes redirecting, approving, and reorienting. The workflow itself erodes your cognitive performance, which makes you even worse at the very tasks that keep the system functioning.

Brain fry is real. When agents produce work almost instantly, the human in the loop becomes the bottleneck. Agent output bleeds into whatever personal time is left, and projects expand too quickly for you to truly understand how everything is built and wired.

The effects of brain fry are significant. Workers with brain fry commit more errors and are increasingly burnt out. Workplace fulfillment drops, and heavier AI usage is associated with higher burnout risk.

There’s a long list of AI paradoxes, and it’s becoming clear that many of the classic paradoxes of automation apply when you think critically about AI and where its use at work is heading.

Oh, The Paradoxes of AI

Much of this is nothing new. There are well-documented paradoxes that emerge when you put humans in oversight roles over automated systems, and understanding them is central to understanding how humans realistically interface with machines in today’s workplace.

The Ironies of Automation (Bainbridge, 1983)

Automation tends to leave humans with the hardest tasks while removing the easy ones.

The system handles routine work, then surfaces the rare, high-stakes, ambiguous cases to the human

Because the human has been out of the loop during routine execution, they have less context when they’re called in

In agentic AI: the agent handles 95% of implementation, then the human gets the 5% that went wrong, with a secondhand mental model of the codebase

Bainbridge wrote this about industrial automation 43 years ago. It maps perfectly onto Claude Code and Cursor in 2026

The AI Productivity Paradox

People feel dramatically more productive with AI than they measurably are.

METR’s randomized controlled trial: across 246 real tasks, developers using AI were 19% slower on average, yet believed they were 20% faster. A 39-point perception gap

A February 2026 update revised the slowdown to roughly 4%, but the perception gap persisted

Philipp Dubach’s synthesis of six independent research efforts: at 92.6% monthly AI adoption and 27% of production code being AI-generated, organizational productivity gains amount to roughly 10%

Daron Acemoglu (MIT, 2024 Nobel laureate in economics) projected only a 0.5% increase in total factor productivity from AI over the next decade, a notably modest figure.

Automation Bias

The more you trust the agent, the less you verify. The less you verify, the more errors compound.

Systematic reviews document errors of omission and commission from over-reliance on decision aids

Time pressure pushes humans toward deferring to AI rather than independently re-evaluating

Particularly dangerous because agents are right often enough to train complacency for the cases when they’re wrong

In judicial and medical contexts, this pattern is already flagged as a serious institutional risk

The Critical-Thinking Paradox

The skills needed to oversee AI degrade the more you rely on AI.

Empirical work links frequent AI “cognitive offloading” with reduced critical thinking scores

The more the system fills in reasoning, the more the human must actively preserve skeptical evaluation habits

Without that active preservation, oversight collapses into rubber-stamping

The skills atrophy at the same time the need for them intensifies

The Verification Burden Paradox

Time saved by AI generation gets consumed by human verification. This is the big one.

Foxit’s March 2026 report: executives believed they saved hours with AI document generation, but spent nearly as much time reviewing outputs. Net gain on the order of minutes

Cursor CEO Michael Truell: “Cursor has made it much faster to write production code. However, for most engineering teams, reviewing code looks the same as it did three years ago”

The BCG/HBR study found that productivity peaked at roughly three tools and declined beyond four.

The Output Paradox

Automation that saves time doesn’t end up saving you time. You create more output instead, and then the bar is raised.

AI makes everyone more productive, then expectations scale to match the new capacity

HBR made it explicit in February 2026: AI doesn’t reduce work, it intensifies it

Mike Manos, CTO of Dun & Bradstreet: “I got the eight hours to two hours, but now I can get 20 hours of work”

This is itself a paradox: the tool designed to reduce workload becomes the mechanism for increasing it

Agent Runners and Workflow Design

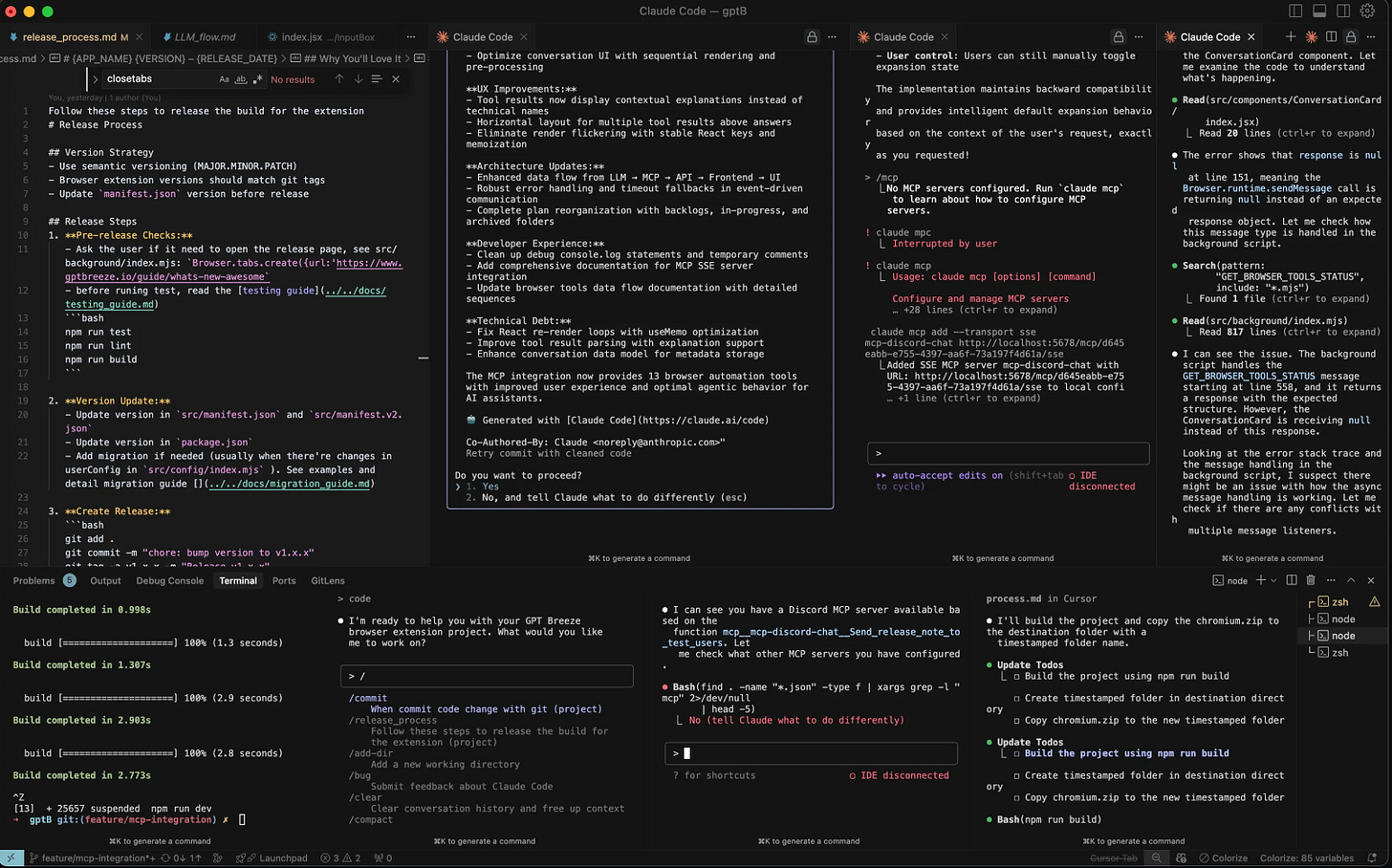

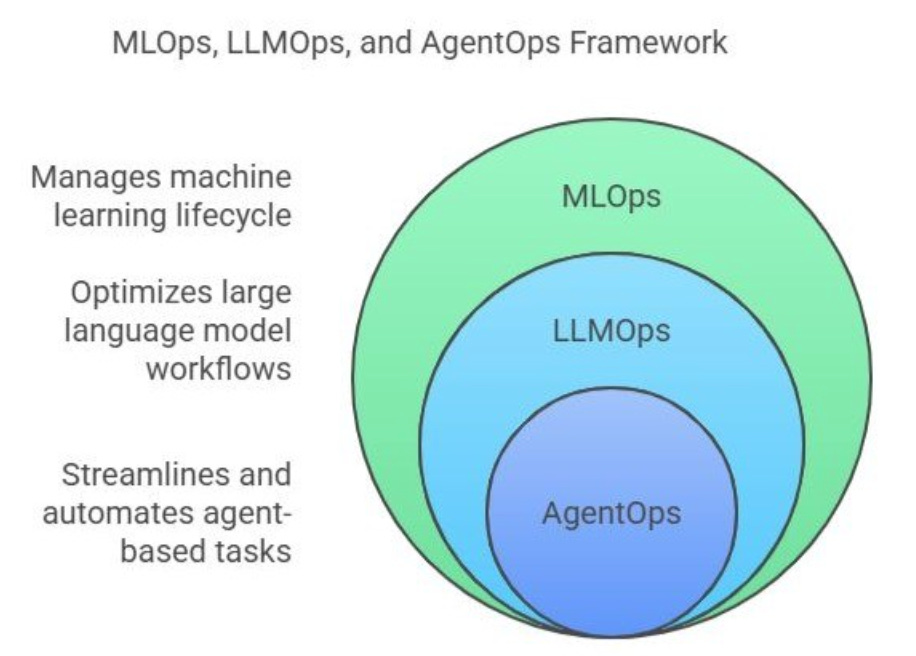

Andrej Karpathy coined ‘vibe coding’ in February 2025, but he now prefers the term ‘agentic engineering’. The new role showing up in the industry is the ‘agent runner’, and the operational discipline being built around it is AgentOps: orchestrating, monitoring, and managing the lifecycle of agent fleets.

Context Engineering has emerged as a core competency for this new role:

Writing specs and prompts that give agents the right info at the right time

Structuring project docs, rules files, and memory systems so agents can operate as independently as possible

Scoping the boundaries of what an agent should and shouldn’t do within a given task (or their role)

Curating and maintaining a knowledge base agents can draw from (like Markdown files and Notion pages)

It’s becoming increasingly clear that the more you plan ahead, and the more accurate, well-scoped documentation you give agents, the longer you can run sessions without human intervention. That’s the best-case scenario, and it’s the reason the space is emphasizing spec-driven development. Many people are installing add-ons like GSD or Superpowers alongside Claude Code to keep agents running longer and longer without fear of context drift.

Some call this a plan-first development model. A key practice is keeping files that accumulate every mistake the agents make, each written down as a rule so it isn’t repeated. Batch prompting is a variant of this: spend hours iterating and planning first, then launch agents and step away from the computer. Ideally, this lets you batch your own cognitive load rather than fragmenting yourself across the day, responding to agents and reorienting over and over again.
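The habit of recording every learned mistake as a rule can be as simple as a helper that appends each lesson to a Markdown rules file the agent reads at the start of a session. The sketch below assumes a hypothetical file named `AGENT_RULES.md` with one bullet per rule; it isn’t modeled on any particular tool’s format. It skips exact duplicates (after normalizing whitespace) so the file doesn’t bloat the agent’s context.

```python
import tempfile
from pathlib import Path

HEADER = "# Rules learned from agent mistakes"


def record_lesson(rules_file: Path, lesson: str) -> bool:
    """Append `lesson` as a bullet rule; return False if it was already recorded."""
    normalized = " ".join(lesson.split())  # collapse whitespace so near-duplicates match
    if not normalized:
        raise ValueError("lesson must not be empty")
    rule = f"- {normalized}"
    if rules_file.exists():
        lines = rules_file.read_text(encoding="utf-8").splitlines()
    else:
        lines = [HEADER, ""]  # start a new file with a heading and a blank line
    if rule in lines:
        return False
    lines.append(rule)
    rules_file.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return True


# Demo in a throwaway directory so running it leaves nothing behind.
with tempfile.TemporaryDirectory() as tmp:
    rules = Path(tmp) / "AGENT_RULES.md"  # hypothetical file name
    record_lesson(rules, "Run the Android build before claiming a fix works.")
    record_lesson(rules, "Run the  Android build before claiming a fix works.")  # duplicate, skipped
    print(rules.read_text(encoding="utf-8"))
```

Rewriting the whole file on each call is deliberately simple; it avoids gluing a new rule onto a last line that lacks a trailing newline, at the cost of being unsuitable for several agents writing at once.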

The key is to protect human attention instead of fragmenting it.

It comes as no surprise, then, that there’s intense interest in fleet management and agent lifecycle tools: essentially, unified command centers for managing fleets of agents. There’s no clear leader yet, but new GitHub projects for visualizing and managing agents crop up daily. Still, unless you’re comfortable with agents managing agents, you need to read and review output manually on a regular basis to keep the ball rolling.
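At its core, a command center like this is a single inbox that every agent reports into, so a human can drain it in batches instead of chasing tabs. Below is a minimal sketch in Python, assuming hypothetical agent names and a simple numeric priority where a lower number means more urgent; it doesn’t correspond to any specific GitHub project or to Claude Code’s own features.

```python
import heapq
import itertools
from dataclasses import dataclass, field


@dataclass(order=True)
class PendingReview:
    priority: int                        # lower number = review sooner
    seq: int                             # arrival order; breaks ties between equal priorities
    agent: str = field(compare=False)    # which agent produced the output
    summary: str = field(compare=False)  # one-line description of what needs review


class ReviewInbox:
    """One queue for every agent's output, drained in batches by a human."""

    def __init__(self) -> None:
        self._heap: list[PendingReview] = []
        self._seq = itertools.count()

    def submit(self, agent: str, summary: str, priority: int = 1) -> None:
        heapq.heappush(self._heap, PendingReview(priority, next(self._seq), agent, summary))

    def drain(self, max_items: int) -> list[PendingReview]:
        """Pop up to max_items reviews, most urgent first (FIFO within a priority)."""
        batch = []
        while self._heap and len(batch) < max_items:
            batch.append(heapq.heappop(self._heap))
        return batch

    def __len__(self) -> int:
        return len(self._heap)


inbox = ReviewInbox()
inbox.submit("claude-code-tab-1", "Refactor of settings screen ready")  # hypothetical agents
inbox.submit("antigravity", "Spec revision for sync feature", priority=2)
inbox.submit("claude-code-tab-2", "APK build still failing", priority=0)

for item in inbox.drain(max_items=2):
    print(item.agent, "-", item.summary)
```

Draining two items surfaces the failing build first, then the oldest priority-1 item, and leaves the spec revision for the next batch. The sequence counter keeps equal-priority items in arrival order, since `heapq` on its own makes no stability guarantee.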

Personally, I’m excited for Claude Code to expand its agent-management features, and curious to see how smaller projects like Builderz evolve.

Either fix the human interface problem with better software, or augment your human wetware.

Biohacking in 2026

At present, there are really two overlapping schools of thought on handling the heavier workloads that come with AI: build better dashboards and tools for managing agents, or biohack your way to cognitive superiority. I suspect many people who are serious about the space will dabble in both, so it’s worth touching on.

We’re all aware of modafinil, which has been used in Silicon Valley as a cognitive enhancer for years. But the space has moved beyond it, and the newer options aren’t discussed online as much as you might expect.

2026 Human Wetware Upgrades

Creatine: 5-10 grams daily for improvements in memory, attention, and processing speed, and for resilience to sleep deprivation.

Lion’s Mane: Said to support BDNF and NGF. Popular on its own or as part of a larger mushroom stack.

Alpha-GPC: Boosts acetylcholine acutely, supporting processing speed and sustained attention during complex task-switching.

Bacopa monnieri: Has traditional and some clinical support for memory enhancement.

LSD: The tech world has been microdosing on a regular basis for decades, and it remains popular because people swear by its effectiveness at fostering divergent thinking.

Peptides

Peptides are a growing category of interest for cognitive enhancement, though the regulatory landscape is complicated. These short chains of amino acids can influence biological processes, including cognition and recovery. Here are a few of them.

BPC-157 (the ‘Wolverine drug’): Widely discussed in biohacking circles for recovery and gut-brain axis benefits. Most of the evidence is preclinical, and the FDA flags safety concerns with compounded peptides.

Semax: Approved for clinical use in Russia since the 1990s. Used for cognitive enhancement and neuroprotection. The evidence is moderate but lacks Western-standard clinical trials, and people are taking it anyway.

Selank: Also approved in Russia. Used for anxiety reduction and cognitive support. Similar evidence profile to Semax but less widely used.

Common wellness and vitamin routines obviously persist, with omega-3s for heart health and magnesium for sleeping soundly. But the nootropics space has exploded in popularity in 2026, at the same time that we’re staying up all night running agents.

Coincidence? I don’t think so.

Where We’re Heading

The human interface problem is largely structural. We’re working against our biology, and the numbers on how our brains work aren’t changing anytime soon. We still need sleep, and we still need to eat. No supplement meaningfully expands working memory, and recovering from a context switch still takes just as long. Peptides can only do so much.

The human capacity to direct, evaluate, and integrate AI-generated output remains biologically fixed. At the same time, companies are cutting headcount and organizations are flattening, while the amount of output a single person can generate with these tools is increasing rapidly. Either we slow down, or we develop new methods to manage the human bottleneck.

The only path forward is designing workflows around human cognitive limits rather than pretending they don’t exist. Planning, batch prompting, and fleet dashboards are a start. For now, people still need to be in the loop, and that isn’t changing anytime soon. Even if you fully scope out a project and write a ton of documentation, there’s a huge gap between what AI says it’s going to do and what it actually outputs, especially when it comes to UI and design. Humans are still the tastemakers.

Now the constraint isn’t technology. It’s us. The sooner we design systems that enhance and preserve human flourishing and sanity, the better.